Suppose, the model was trained using two highly positively-correlated features x1 and x2 (left plot on the illustration below). The possible explanation for this is the model’s extrapolation. (2010) investigates these claims of feature preference in a large scale simulation study and again find that the OOB measures overestimate the importance of correlated predictors. Archer and Kimes (2008) explore a similar set-up and also note improved performance when true features - those actually related to the response - are uncorrelated with noise features.(2007) investigate classification and note that the importance measures based on permuted out-of-bag (OOB) error in CART built trees are biased toward features that are correlated with other features and/or have many categories and further suggest that bootstrapping exaggerates these effects Giles Hooker and Lucas Mentch combined them in their paper “ Please Stop Permuting Features An Explanation and Alternatives”: While being a very attractive choice for model interpretation, permutation importance has several problems, especially when working with correlated features. For those reasons, permutation importance is wildly applied in many machine learning pipelines. Also, permutation importance allows you to select features: if the score on the permuted dataset is higher then on normal - it’s a clear sign to remove the feature and retrain a model. Although calculation requires to make predictions on training data n_featurs times, it’s not a substantial operation, compared to model retraining or precise SHAP values calculation. Permutation importance is easy to explain, implement, and use.

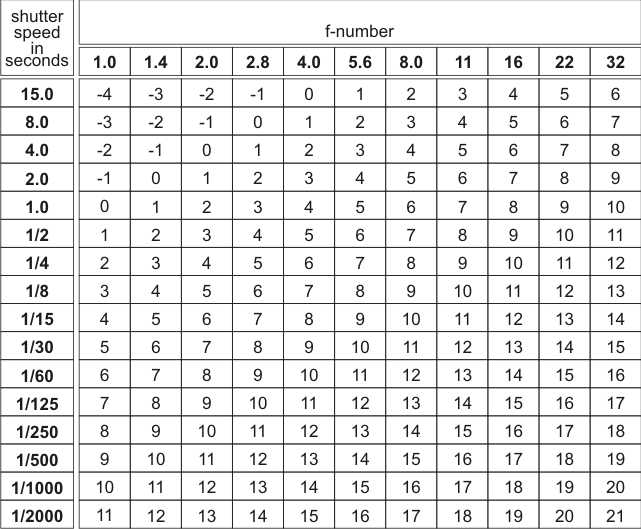

The process is repeated several times to reduce the influence of random permutations and scores or ranks are averaged across runs.Ĭode snippet to illustrate the calculations:.Lower the delta - the more important the feature. To calculate permutation importance for each feature feature_i, do the following: (1) permute feature_i values in the training dataset while keeping all other features “as is” - X_train_permuted (2) make predictions using X_train_permuted and previously trained model - y_hat_permuted (3) calculate the score on the permuted dataset - score_permuted (4) The importance of the feature is equal to score_permuted - score.Make predictions for a training dataset X_train - y_hat and calculate the score - score (higher score = better).Train model with training data X_train, y_train.It is calculated with several straightforward steps. It shows the drop in the score if the feature would be replaced with randomly permuted values. Permutation importance is a frequently used type of feature importance. I will show that in some cases, permutation importance gives wrong, misleading results. In this post, I’d like to address a bias of over-using permutation importance for finding the influencing features. Indeed, if one could run “pip install lib, lib.explain(model)”, why bother on the theory behind? The availability and simplicity of the methods are making them “golden hammer”. ( Interpreting Interpretability: Understanding Data Scientists’ Use of Interpretability Tools for Machine Learning) showed that not all data scientists know how to do it correctly. While others are “universal”, they could be applied to almost any model: methods such as SHAP values, permutation importances, drop-and-relearn approach, and many others.Īlthough the model’s black box unboxing is an integral part of the model development pipeline, a study conducted by Harmanpreet et al. 
Some of them are based on the model’s type, e.g., coefficients of linear regression, gain importance in tree-based models, or batch norm parameters in neural nets (BN params are often used for NN pruning, i.e., neural network compression for example, this paper addresses CNN nets, but the same logic could be applicable to fully-connected nets). There are a lot of ways how we could calculate feature importance nowadays. Indeed, the model’s top important features may give us inspiration for further feature engineering and provide insights on what is going on. Also, importance is frequently using for understanding the underlying process and making business decisions. Importances could help us to understand if we have biases in our data or bugs in models. Data scientists need features importances calculations for a variety of tasks.
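The extrapolation argument for the x1/x2 scenario described above can be checked numerically. The NumPy sketch below assumes synthetic data in which x2 equals x1 plus small Gaussian noise. The helper `off_manifold_fraction` and the threshold of 0.5 are hypothetical choices for illustration. Permuting x1 while keeping x2 "as is" breaks the joint distribution. Many of the permuted points then land far from the narrow diagonal band the model saw during training, so score_permuted partly measures the model's behaviour in regions where it was never fitted.

```python
import numpy as np

def off_manifold_fraction(x1, x2, threshold):
    """Hypothetical helper: share of (x1, x2) points farther than `threshold`
    from the diagonal x2 = x1, around which the training data is concentrated."""
    # Perpendicular distance from the line x2 = x1
    dist = np.abs(x2 - x1) / np.sqrt(2)
    return float(np.mean(dist > threshold))

rng = np.random.default_rng(0)
n = 10_000

# Assumed synthetic setup: two highly positively correlated features
x1 = rng.normal(size=n)
x2 = x1 + rng.normal(scale=0.1, size=n)
print("correlation:", np.corrcoef(x1, x2)[0, 1])

# Permute x1 only, as permutation importance does, keeping x2 unchanged
x1_perm = rng.permutation(x1)

# Compare how many points fall outside the diagonal band before and after permuting
thr = 0.5
print("original off-band fraction:", off_manifold_fraction(x1, x2, thr))
print("permuted off-band fraction:", off_manifold_fraction(x1_perm, x2, thr))
```

Lowering the noise scale (i.e., increasing the correlation) narrows the band the model is trained on. The permuted points stay just as spread out as before.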

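The step-by-step procedure listed earlier can be sketched in plain NumPy. This is a minimal illustration, not necessarily how the author implemented it. The helper names (`r2_score`, `LinearModel`, `permutation_importance_deltas`) and the synthetic data are hypothetical, and R² is assumed as the score (higher = better). Following the post's definition, the function returns score_permuted - score, so important features get negative deltas. For comparison, scikit-learn's `sklearn.inspection.permutation_importance` reports the opposite sign (baseline score minus permuted score).

```python
import numpy as np

def r2_score(y, y_hat):
    """Coefficient of determination: higher is better (1.0 = perfect fit)."""
    ss_res = np.sum((y - y_hat) ** 2)
    ss_tot = np.sum((y - np.mean(y)) ** 2)
    return 1.0 - ss_res / ss_tot

class LinearModel:
    """Hypothetical minimal model: ordinary least squares with an intercept."""
    def fit(self, X, y):
        X1 = np.column_stack([np.ones(len(X)), X])
        self.coef_, *_ = np.linalg.lstsq(X1, y, rcond=None)
        return self

    def predict(self, X):
        return np.column_stack([np.ones(len(X)), X]) @ self.coef_

def permutation_importance_deltas(model, X, y, score_fn, n_repeats=5, seed=0):
    """Return score_permuted - score per feature, averaged over n_repeats runs.
    Lower (more negative) delta = more important feature."""
    rng = np.random.default_rng(seed)
    score = score_fn(y, model.predict(X))              # step 2: baseline score
    deltas = np.zeros(X.shape[1])
    for i in range(X.shape[1]):
        for _ in range(n_repeats):                     # step 4: repeat and average
            X_perm = X.copy()
            X_perm[:, i] = rng.permutation(X_perm[:, i])  # step 3.1: permute feature i only
            y_hat_perm = model.predict(X_perm)            # step 3.2: predict with the trained model
            score_perm = score_fn(y, y_hat_perm)          # step 3.3: score on permuted data
            deltas[i] += score_perm - score               # step 3.4: importance = delta
        deltas[i] /= n_repeats
    return deltas

# Synthetic data: y depends strongly on feature 0, weakly on feature 1, not on feature 2
rng = np.random.default_rng(42)
X_train = rng.normal(size=(1_000, 3))
y_train = 3.0 * X_train[:, 0] + 0.5 * X_train[:, 1] + rng.normal(scale=0.1, size=1_000)

model = LinearModel().fit(X_train, y_train)            # step 1: train the model
deltas = permutation_importance_deltas(model, X_train, y_train, r2_score)
print(deltas)
```

Under the selection rule from the post, features with a positive delta (the score improved after permuting) would be candidates for removal before retraining, e.g. `np.where(deltas > 0)[0]`.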